That path to AI: #8 Hacking AI with adversarial machine learning

Adversarial machine learning

Adversarial machine learning exploits the way artificial intelligence algorithms work in order to disrupt their behavior. In recent years, it has become an active area of research as the role of AI continues to grow in a vast number of the applications we use. There is rising concern that vulnerabilities in machine learning algorithms can be exploited for malicious attacks.

While there are numerous types of attacks and techniques to exploit machine learning systems, in general terms all attacks come down to either:

- Classification evasion: this is the most well-known type of attack, in which the cybercriminal conceals malicious content so that it bypasses the algorithm's filters. (Figure 14)

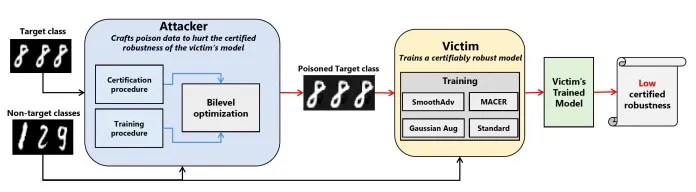

- Data poisoning: this type of attack is very sophisticated; the cybercriminal tries to control the learning process by injecting fake data that compromises the algorithm's outputs. (Figure 15)
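To make the difference between the two attack families concrete, here is a minimal sketch. It assumes a toy nearest-centroid classifier over a single made-up "suspiciousness" score; the data, the function names and the classifier itself are illustrative assumptions, not a real filter. In the evasion case the model stays the same and the attacker changes the sample; in the poisoning case the sample stays the same and the attacker changes the training data.

```python
def train_centroids(samples):
    """Return the mean feature value per label from (value, label) pairs."""
    totals, counts = {}, {}
    for value, label in samples:
        totals[label] = totals.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: totals[label] / counts[label] for label in totals}


def classify(centroids, value):
    """Assign the label whose centroid is closest to the value."""
    return min(centroids, key=lambda label: abs(value - centroids[label]))


# Hypothetical 1-D "suspiciousness" scores; higher means more suspicious.
clean_data = [(1.0, "benign"), (2.0, "benign"), (3.0, "benign"),
              (7.0, "malicious"), (8.0, "malicious"), (9.0, "malicious")]
clean_model = train_centroids(clean_data)  # centroids: benign 2.0, malicious 8.0

# Classification evasion: the model is untouched; the attacker alters the
# sample at test time until it falls on the benign side of the boundary (5.0).
original_sample = 6.0
evasive_sample = 4.5
before_evasion = classify(clean_model, original_sample)
after_evasion = classify(clean_model, evasive_sample)

# Data poisoning: the sample is untouched; the attacker injects mislabeled
# points into the training set, dragging the benign centroid up to 5.5.
poison = [(9.0, "benign")] * 3
poisoned_model = train_centroids(clean_data + poison)
after_poisoning = classify(poisoned_model, original_sample)

print(before_evasion, after_evasion, after_poisoning)
# prints: malicious benign benign
```

The same sample (score 6.0) is caught by the clean model, slips through once it is modified, and also slips through unmodified once the training set has been poisoned.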

Bypassing machine learning with reinforcement learning

Reinforcement learning models are essential methods for building intelligent machines. In this type of learning, an agent observes the environment, then selects and performs actions. When an action is right, the agent gets a reward. The learning process keeps going, and the agent learns to choose the best action based on a state and a reward function.
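The agent, state, action and reward loop described above can be sketched with tabular Q-learning, one common reinforcement learning algorithm (chosen here for illustration; the text does not name a specific one). The corridor environment, the hyperparameters and all names below are assumptions made for the example.

```python
import random

N_STATES = 5          # positions 0..4 in a corridor; position 4 is the goal
LEFT, RIGHT = 0, 1
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2
MAX_STEPS = 100       # safety cap on the length of one episode


def step(state, action):
    """Environment: move one cell, reward 1.0 only on reaching the goal."""
    next_state = max(0, state - 1) if action == LEFT else state + 1
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def choose_action(q_row, rng):
    """Epsilon-greedy: mostly exploit, sometimes explore; break ties randomly."""
    if rng.random() < EPSILON:
        return rng.choice([LEFT, RIGHT])
    best = max(q_row)
    return rng.choice([a for a, value in enumerate(q_row) if value == best])


def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(MAX_STEPS):
            action = choose_action(q[state], rng)
            next_state, reward, done = step(state, action)
            # Q-learning update: move the estimate toward the reward plus
            # the discounted value of the best action in the next state.
            target = reward + (0.0 if done else GAMMA * max(q[next_state]))
            q[state][action] += ALPHA * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


q_table = train()
# Greedy policy for every non-goal state (0 = LEFT, 1 = RIGHT).
policy = [max((LEFT, RIGHT), key=lambda a: q_table[s][a])
          for s in range(N_STATES - 1)]
print(policy)
```

After training, the greedy policy should head right in every state, because that is the only direction that ever leads to a reward.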

Deep reinforcement learning was proposed as a framework for playing Atari games, training agents that regularly surpass human performance. Among the key contributions of the deep reinforcement learning framework was its capacity, as in deep learning, for the agent to learn a value function end to end. By applying reinforcement learning over a series of games played against the anti-malware system, the malware learns which sequences of actions are likely to result in evading the anti-malware. The reverse also holds: the defender can mimic this procedure to counter the evasive strategies of the malware, and so on.
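The game against the anti-malware system can be illustrated with a deliberately tiny sketch. Everything here is hypothetical: the "detector" is a made-up weighted score over three binary features, the "modifications" simply switch a feature off, and plain tabular Q-learning stands in for the deep reinforcement learning used in practice. The point is only to show an agent discovering a sequence of actions that ends in evasion.

```python
import random

# Hypothetical feature weights of a toy detector; not a real anti-malware product.
WEIGHTS = (5, 3, 4)      # suspicious strings, visible payload, risky import
THRESHOLD = 5            # score >= THRESHOLD means "detected"
N_ACTIONS = 4            # actions 0..2 hide one feature each; action 3 is a no-op
START = (1, 1, 1)        # the unmodified sample has all three features


def detected(sample):
    return sum(w * f for w, f in zip(WEIGHTS, sample)) >= THRESHOLD


def apply_action(sample, action):
    """Return the modified sample; action 3 leaves it unchanged."""
    if action == 3:
        return sample
    return tuple(0 if i == action else f for i, f in enumerate(sample))


def train(episodes=2000, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {}  # maps a sample (state) to its list of action values
    for _ in range(episodes):
        sample = START
        for _ in range(10):  # cap on modifications per game
            row = q.setdefault(sample, [0.0] * N_ACTIONS)
            if rng.random() < epsilon:
                action = rng.randrange(N_ACTIONS)
            else:
                action = row.index(max(row))
            new_sample = apply_action(sample, action)
            evaded = not detected(new_sample)
            reward = 1.0 if evaded else -0.1  # small cost per failed attempt
            next_row = q.setdefault(new_sample, [0.0] * N_ACTIONS)
            future = 0.0 if evaded else gamma * max(next_row)
            row[action] += alpha * (reward + future - row[action])
            if evaded:
                break
            sample = new_sample
    return q


def greedy_evasion(q, max_steps=10):
    """Replay the learned policy without exploration."""
    sample, actions = START, []
    for _ in range(max_steps):
        row = q.get(sample, [0.0] * N_ACTIONS)
        action = row.index(max(row))
        actions.append(action)
        sample = apply_action(sample, action)
        if not detected(sample):
            break
    return actions, sample


q_values = train()
actions, final_sample = greedy_evasion(q_values)
print(actions, final_sample, detected(final_sample))
```

In this toy setup no single modification is enough: the agent has to learn that hiding the heaviest feature (action 0) must be combined with one more change. A defender could run the same loop to find out which sequences of changes slip past the detector and then adjust the weights or threshold.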

The reinforcement learning algorithm learns tricks to collect as many rewards as possible; the same methodology was used to bypass the anti-malware system.